CoEx

Correlate-and-Excite: Real-Time Stereo Matching via Guided Cost Volume Excitation

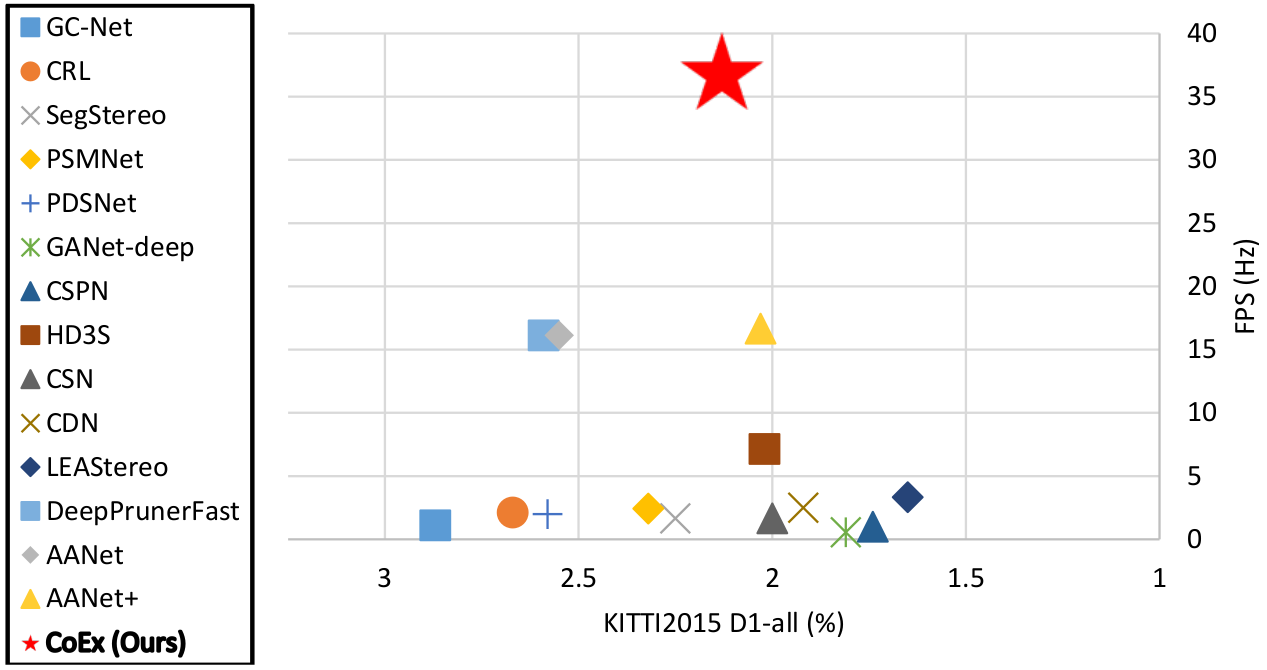

D1-all% error on the KITTI stereo 2015 leaderboard vs. frame rate. Our proposed method, CoEx (red star), achieves competitive performance compared to other state-of-the-art models while also running in real time.

Abstract

Volumetric deep learning approaches to stereo matching aggregate a cost volume computed from the input left and right images using 3D convolutions. Recent works showed that utilizing extracted image features and spatially varying cost volume aggregation complements 3D convolutions. However, existing methods with spatially varying operations are complex, take considerable computation time, and increase memory consumption. In this work, we construct Guided Cost volume Excitation (GCE) and show that simple channel excitation of the cost volume guided by the image can improve performance considerably. Moreover, we propose a novel method of using top-k selection prior to soft-argmin disparity regression for computing the final disparity estimate. Combining our novel contributions, we present an end-to-end network that we call Correlate-and-Excite (CoEx). Extensive experiments on the SceneFlow, KITTI 2012, and KITTI 2015 datasets demonstrate the effectiveness and efficiency of our model and show that it outperforms other speed-based algorithms while remaining competitive with other state-of-the-art algorithms.

Contributions

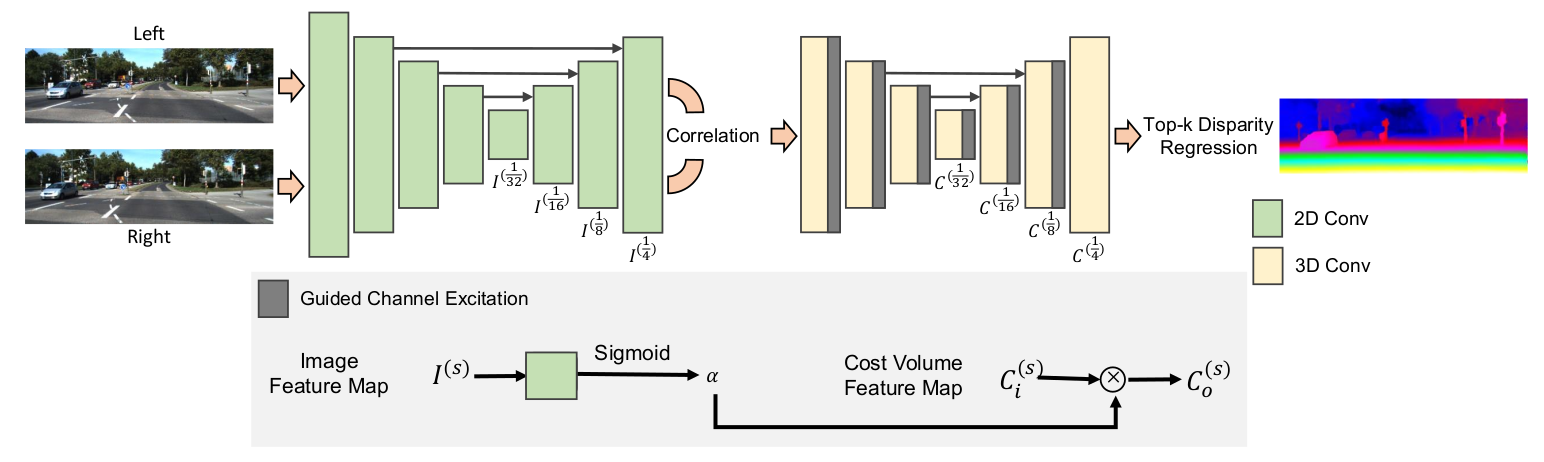

- We present Guided Cost volume Excitation (GCE), which uses feature maps extracted from the image as guidance for cost aggregation to improve performance.

- We propose a new method of disparity regression, in place of soft-argmax (soft-argmin), that computes disparity from the top-k matching cost values, and show that it reliably improves performance.

- Through these methods, we build a real-time stereo matching network, CoEx, that outperforms other speed-oriented methods and is competitive with state-of-the-art models.

CoEx overall architecture

GCE

GCE excites the stereo cost volume using weights extracted from image features.

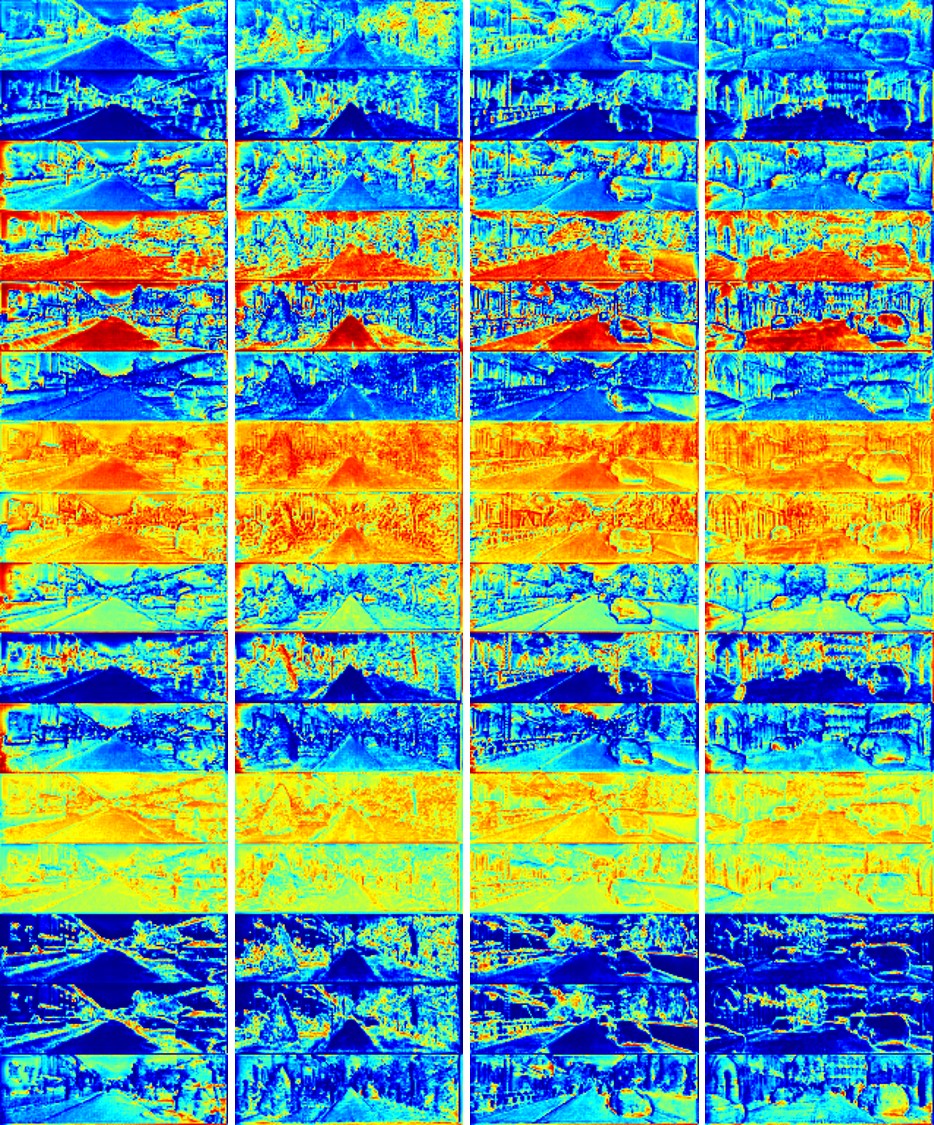

Computed excitation weights in one of the GCE layers.

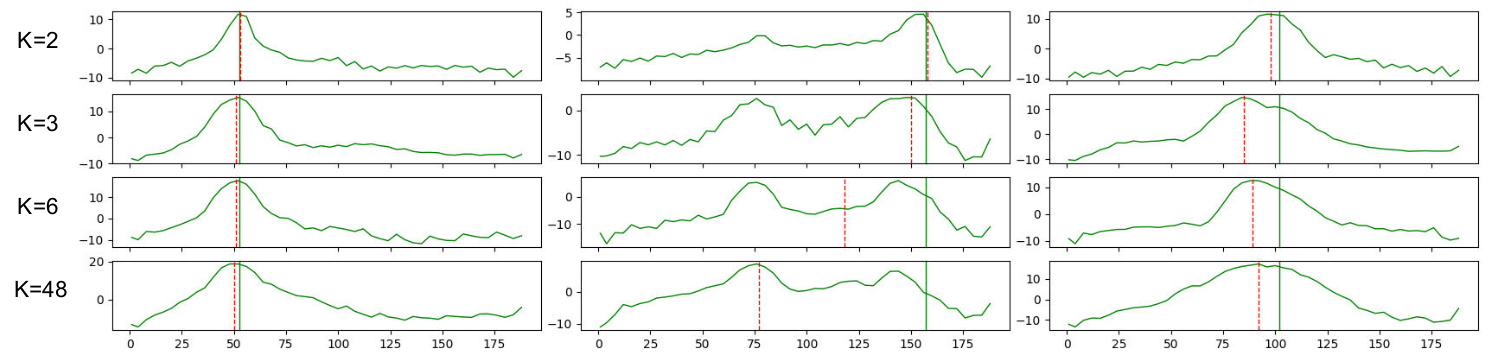
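As a rough illustration of the excitation step, the sketch below gates the cost volume at a single pixel. It assumes the weights come from a pointwise (1x1) linear projection of the image feature followed by a sigmoid, which this page does not spell out; `excite_pixel`, `weight`, and `bias` are hypothetical names chosen for the example, and the toy values are made up.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def excite_pixel(cost, feat, weight, bias):
    """Channel-excite the cost volume at one pixel.

    cost:   [C][D] values (C channels, D disparity candidates)
    feat:   [F] image feature vector at the same pixel
    weight: [C][F] and bias: [C], a hypothetical pointwise projection
    """
    # One gate per cost-volume channel, computed from the image feature only.
    gates = [
        sigmoid(sum(w * f for w, f in zip(weight[c], feat)) + bias[c])
        for c in range(len(cost))
    ]
    # The same gate is applied to every disparity candidate of a channel.
    return [[gates[c] * v for v in cost[c]] for c in range(len(cost))]


# Toy inputs: 2 channels, 3 disparity candidates, 2 feature dimensions.
cost = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
feat = [0.5, -1.0]
weight = [[0.0, 0.0], [2.0, 0.0]]
bias = [0.0, 0.0]
excited = excite_pixel(cost, feat, weight, bias)
```

Because the gate depends on the pixel's image feature but not on the disparity index, the operation varies spatially while adding only a per-channel multiplication on top of the cost volume.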

Top-k soft-argmin disparity regression

Instead of computing disparity by soft-argmin using the whole cost volume, only the top-k relevant values are used.

The ground-truth disparity is shown as a vertical solid green line, and the predicted disparity as a vertical dashed red line. k=2 gives the regression estimate closest to the ground truth.

Panels: Top-48, Top-2, Top-2.
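The regression described above can be sketched for a single pixel as follows. This is a minimal illustration, not the network's actual code: it assumes `scores[d]` is the aggregated matching score for disparity `d` with higher meaning a better match (negate the values first if the volume stores costs), and the function name and toy scores are hypothetical.

```python
import math


def topk_soft_argmin(scores, k):
    """Regress a disparity from the k best-scoring candidates at one pixel."""
    # Indices of the k highest scores (assumption: higher = better match).
    top = sorted(range(len(scores)), key=lambda d: scores[d], reverse=True)[:k]
    # Softmax restricted to the selected candidates; subtract the peak
    # before exponentiating for numerical stability.
    peak = max(scores[d] for d in top)
    exps = [math.exp(scores[d] - peak) for d in top]
    total = sum(exps)
    # Expected disparity under the renormalized distribution.
    return sum(d * e / total for d, e in zip(top, exps))


# Toy distribution: a main peak at d = 2..3 and a weaker second mode at d = 6.
scores = [0.0, 0.1, 4.0, 4.0, 0.2, 0.0, 2.5, 0.1]
full = topk_soft_argmin(scores, len(scores))  # plain soft-argmin
top2 = topk_soft_argmin(scores, 2)            # only the two best candidates
```

With these toy scores, the full regression is pulled toward the secondary mode at d = 6, while the top-2 estimate stays between the two best candidates.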

Demo

Real-time stereo matching

Stereo 3D reconstruction

Reconstructed 3D point cloud computed from the predicted stereo disparity map.
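For readers unfamiliar with this step, a disparity map can be back-projected to 3D points with the standard rectified pinhole stereo relation Z = f · B / d. The sketch below is only an illustration under that assumption; the function name and the camera parameters (`focal_px`, `baseline_m`, `cx`, `cy`) are hypothetical and are not taken from the demo.

```python
def disparity_to_points(disparity, focal_px, baseline_m, cx, cy):
    """Back-project a disparity map (list of rows, in pixels) to 3D points.

    Assumes a rectified pinhole stereo pair: focal length in pixels,
    baseline in meters, principal point (cx, cy) in pixels.
    """
    points = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            if d <= 0:
                continue  # skip pixels without a valid disparity
            z = focal_px * baseline_m / d       # depth from disparity
            x = (u - cx) * z / focal_px         # lateral offset
            y = (v - cy) * z / focal_px         # vertical offset
            points.append((x, y, z))
    return points


# Toy 2x2 disparity map with one invalid pixel.
disparity = [[10.0, 0.0], [20.0, 40.0]]
points = disparity_to_points(
    disparity, focal_px=720.0, baseline_m=0.5, cx=0.5, cy=0.5
)
```

Since depth is inversely proportional to disparity, small disparity errors on distant surfaces translate into large depth errors, which is one reason accurate regression matters for reconstruction.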

Application - visual odometry and point cloud mapping

Application test using the computed stereo depth to perform visual odometry and point cloud mapping.

Related works

[1] J.-R. Chang and Y.-S. Chen, “Pyramid stereo matching network,” in CVPR, 2018.

[2] F. Zhang, V. Prisacariu, R. Yang, and P. H. Torr, “GA-Net: Guided aggregation net for end-to-end stereo matching,” in CVPR, 2019.